Texas-Sized Computer Finds Most Massive Black Hole in Galaxy M87

8 June 2009

Indicates Accepted Masses for Black Holes in Nearby Galaxies Too Low

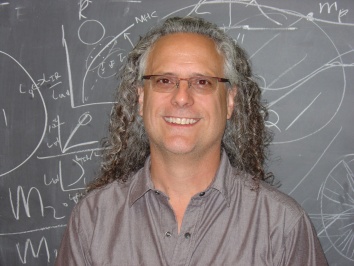

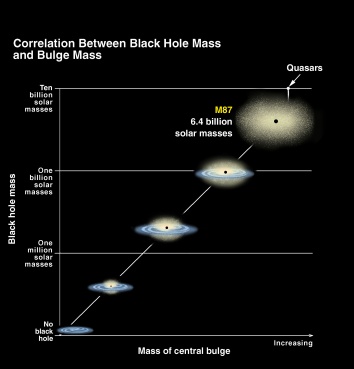

PASADENA, Calif. — Astronomers Karl Gebhardt (The University of Texas at Austin) and Jens Thomas (Max Planck Institute for Extraterrestrial Physics) have used new computer modeling techniques to discover that the black hole at the heart of M87, one of the largest nearby giant galaxies, is two to three times more massive than previously thought. Weighing in at 6.4 billion times the Sun’s mass, it is the most massive black hole yet measured with a robust technique, and the result suggests that the accepted black hole masses in nearby large galaxies may be off by similar amounts. This has consequences for theories of how galaxies form and grow, and might even solve a long-standing astronomical paradox.

Gebhardt will detail these results in a press conference June 8 at 12 Noon PDT at the 214th meeting of the American Astronomical Society in Pasadena, Calif. They will be published later this summer in The Astrophysical Journal, in a paper by Gebhardt and Thomas.

To try to understand how galaxies form and grow, astronomers need to start with basic census information about today’s galaxies. What are they made of? How big are they? How much do they weigh? Astronomers measure this last category, galaxy mass, by clocking the speed of stars orbiting within the galaxy.
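As a simplified illustration of that idea (a textbook approximation, not the detailed modeling described in this release), a star moving at speed $v$ on a circular orbit of radius $r$ implies an enclosed mass of roughly

$$
M(<r) \approx \frac{v^{2}\, r}{G}
$$

where $G$ is the gravitational constant. Faster stars at a given radius therefore indicate more mass inside their orbits.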

Studies of the total mass are important, Thomas said, but “the crucial point is to determine whether the mass is in the black hole, the stars, or the dark halo. You have to run a sophisticated model to be able to discover which is which. The more components you have, the more complicated the model is.”

To model M87, Gebhardt and Thomas used one of the world’s most powerful supercomputers, the Lonestar system at The University of Texas at Austin’s Texas Advanced Computing Center. Lonestar is a Dell Linux cluster with 5,840 processing cores and can perform 62 trillion floating-point operations per second. (Today’s top-of-the-line laptop computer has two cores and can perform up to 10 billion floating-point operations per second.)
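For a rough sense of scale, the figures quoted above can be compared directly. The short Python sketch below is illustrative only: the variable and function names are invented here, and the numbers are simply the rates and core counts stated in the paragraph, not measured performance.

```python
# Figures quoted in the release (stated rates, not benchmark measurements).
LONESTAR_FLOPS = 62e12   # 62 trillion floating-point operations per second
LONESTAR_CORES = 5840
LAPTOP_FLOPS = 10e9      # 10 billion floating-point operations per second
LAPTOP_CORES = 2


def ratio(a: float, b: float) -> float:
    """Return how many times larger a is than b."""
    return a / b


speed_ratio = ratio(LONESTAR_FLOPS, LAPTOP_FLOPS)
core_ratio = ratio(LONESTAR_CORES, LAPTOP_CORES)

print(f"Lonestar performs about {speed_ratio:,.0f} times as many operations per second")
print(f"Lonestar has {core_ratio:,.0f} times as many cores")
```

By these figures, Lonestar’s stated rate is about 6,200 times that of the laptop, with 2,920 times as many cores.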

Gebhardt and Thomas’s model of M87 was more complicated than previous models of the galaxy because, in addition to modeling its stars and black hole, it took into account the galaxy’s “dark halo,” a spherical region that extends beyond the galaxy’s main visible structure and contains its mysterious “dark matter.”

“In the past, we have always considered the dark halo to be significant, but we did not have the computing resources to explore it as well,” Gebhardt said. “We were only able to use stars and black holes before. Toss in the dark halo, it becomes too computationally expensive, you have to go to supercomputers.”

The Lonestar result was a mass for M87’s black hole two to three times what previous models had found. “We did not expect it at all,” Gebhardt said. He and Thomas simply wanted to test their model on “the most important galaxy out there,” he said.

M87 is extremely massive and conveniently nearby (in astronomical terms). Nearly three decades ago, it became one of the first galaxies suggested to harbor a central black hole. It also has an active jet shooting light out of the galaxy’s core as matter swirls closer to the black hole, allowing astronomers to study the process by which black holes attract matter. All of these factors make M87 “the anchor for supermassive black hole studies,” Gebhardt said.

These new results for M87, together with hints from other recent studies and his own recent telescope observations (publications in preparation), lead Gebhardt to suspect that black hole masses for all of the most massive galaxies are underestimated.

That conclusion “is important for how black holes relate to galaxies,” Thomas said. “If you change the mass of the black hole, you change how the black hole relates to the galaxy.” There is a tight relation between a galaxy and its black hole, which has allowed researchers to probe the physics of how galaxies grow over cosmic time. Increasing the black hole masses in the most massive galaxies will require this relation to be re-evaluated.

Higher masses for black holes in nearby galaxies also could solve a paradox concerning the masses of quasars — active black holes at the centers of extremely distant galaxies, seen at a much earlier cosmic epoch. Quasars shine brightly because the material spiraling in gives off copious radiation before crossing the event horizon (the boundary beyond which nothing — not even light — can escape).

“There is a long-standing problem in that quasar black hole masses were very large — 10 billion solar masses,” Gebhardt said. “But in local galaxies, we never saw black holes that massive, not nearly. The suspicion was before that the quasar masses were wrong,” he said. But “if we increase the mass of M87 two or three times, the problem almost goes away.”

Today’s conclusions are model-based, but Gebhardt also has made new telescope observations of M87 and other galaxies using powerful new instruments on the Gemini North Telescope and the European Southern Observatory’s Very Large Telescope. He said these data, which will be submitted for publication soon, support the current model-based conclusions about black hole mass.

For future telescope observations of galactic dark haloes, Gebhardt notes that a relatively new instrument at The University of Texas at Austin’s McDonald Observatory is perfect. “If you need to study the halo to get the black hole mass, there’s no better instrument than VIRUS-P,” he said. The instrument is a spectrograph. It separates the light from astronomical objects into its component wavelengths, creating a signature that can be read to find out an object’s distance, speed, motion, temperature, and more.

VIRUS-P is good for halo studies because it can take spectra over a very large area of sky, allowing astronomers to reach the very low light levels at large distances from the galaxy center where the dark halo is dominant. It is a prototype, built to test technology going into the larger VIRUS spectrograph for the forthcoming Hobby-Eberly Telescope Dark Energy Experiment (HETDEX).

— END —

Contacts:

Dr. Karl Gebhardt, The University of Texas at Austin (mobile 512-590-5206). More information about Dr. Gebhardt is available in our Experts Guide.

Dr. Jens Thomas, Max Planck Institute for Extraterrestrial Physics, Germany (+49-89-30000-3714)

Rebecca Johnson, Press Officer, UT-Austin Astronomy Program (512-475-6763)

Faith Singer-Villalobos, Press Officer, UT-Austin Texas Advanced Computing Center (512-232-5771)